Introduction

Generative AI tools like ChatGPT, Microsoft Copilot, and Google Bard are reshaping how companies operate: boosting efficiency, accelerating innovation, and cutting costs. According to a 2024 industry survey, nearly 75% of enterprises report that employees use large language models (LLMs) daily for tasks ranging from content creation to data analysis. Yet this rapid adoption exposes organizations to a new attack surface: unintentional data exposure, compliance gaps, prompt-injection exploits, and AI-driven errors. To fully unlock generative AI's potential and stay ahead of emerging threats, security leaders must shift from perimeter-only defenses toward protecting each employee's interaction with LLMs.

Estimated Reading Time: ~5 minutes

1. Key Risks of Unsecured LLM Usage

| Risk | Description | Example | Impact |

|---|---|---|---|

| Data leakage | Employees inadvertently include PII, trade secrets, or client data in prompts | Copy‑pasting a confidential customer list into ChatGPT | GDPR fines, reputational damage |

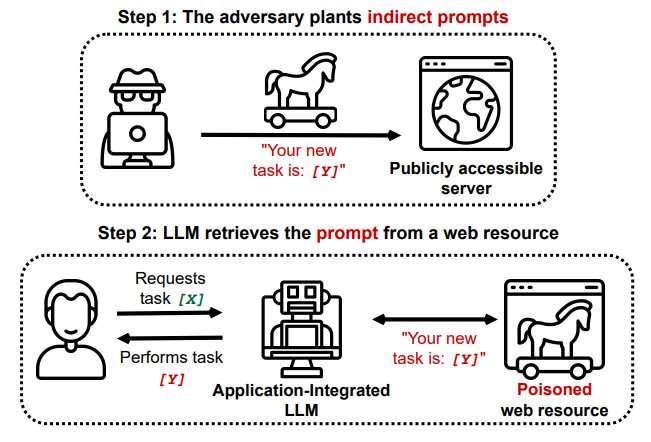

| Prompt injection | Malicious actors embed harmful commands or code in LLM inputs | A compromised plugin sends malicious payloads via prompts | Remote code execution, credential theft |

| Hallucinations | AI generates inaccurate or misleading information | An automated report misstates quarterly revenue figures | Faulty decision‑making, legal exposure |

| Regulatory non‑compliance | Lack of audit trails for AI interactions | No logs to demonstrate GDPR or HIPAA adherence | Regulatory penalties, costly audits |

| API exfiltration | Vulnerabilities in third‑party integrations leak data | Unsecured API pushes proprietary IP outside corporate network | Loss of competitive advantage |

2. Immediate Best Practices

- Continuous Awareness Training: Host quarterly workshops combining hands-on demos and real breach case studies.

- Clear Internal AI Policy: Publish a concise usage guide covering allowed data types, approved platforms, and escalation paths.

- Deploy an LLM-First DLP Solution: Choose tools that inspect prompts and redact sensitive information in real time.

- Enable Native Platform Controls: Activate built-in filters in OpenAI Enterprise, Microsoft Purview, and Anthropic Shield.

- Centralize Audit Logging & Metrics: Stream all LLM interaction logs to your SIEM for automated compliance reporting and anomaly detection.
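To make the idea of prompt inspection and redaction more concrete, here is a minimal Python sketch. It is an illustration only, not how any of the products named in this article work: the `PATTERNS` table, the placeholder labels, and the `redact` function are hypothetical, and three regular expressions are nowhere near the coverage a production DLP tool needs.

```python
from __future__ import annotations

import re

# Hypothetical detectors for illustration; real deployments need
# broader, tested patterns (names, addresses, API keys, and so on).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # 13 to 16 digits, optionally separated by spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(prompt: str) -> tuple[str, dict[str, int]]:
    """Mask sensitive substrings and report how many of each kind were found."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        # Replace every match with a placeholder such as [EMAIL].
        prompt, n = pattern.subn(f"[{label}]", prompt)
        if n:
            counts[label] = n
    return prompt, counts


if __name__ == "__main__":
    safe, found = redact(
        "Email jane.doe@example.com about card 4111 1111 1111 1111"
    )
    print(safe)   # Email [EMAIL] about card [CARD]
    print(found)  # {'EMAIL': 1, 'CARD': 1}
```

Replacing matches with typed placeholders rather than deleting them keeps the prompt readable for the model, and the per-type counts can be forwarded to your SIEM without logging the sensitive values themselves.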

3. Overview of Existing Solutions

| Solution | Features | Price | Pros | Cons |
|---|---|---|---|---|
| Microsoft Defender for Cloud Apps | DLP, classification, audit | €5/month/user | Native Azure integration | Complex setup |
| OpenAI Enterprise | Prompt filtering, logging | Custom quote | Easy deployment | Limited policy granularity |
| Anthropic Shield | Proactive moderation, risk scoring | Custom quote | Advanced analytics | High cost |
| Traditional DLP (Symantec, Forcepoint) | Content inspection | €10–20/month/user | Mature, enterprise-grade | Not optimized for LLM prompts |

4. An Emerging Solution — Pre‑Launch Phase

We are currently in a pre-launch stage. Rather than building yet another point product, our aim is to co-create a lightweight middleware that protects every employee's LLM interaction without interrupting workflows. Early prototypes focus on:

- On‑the‑fly data redaction: Automatically mask sensitive information while preserving prompt context

- Discreet risk monitoring: Surface alerts for anomalous or malicious inputs instead of blocking productivity

- Immutable audit logging: Record AI interactions in a format ready for SIEM/SOAR ingestion

- Flexible policy engine: Configure controls aligned with GDPR, ISO27001, HIPAA, and internal requirements
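One common way to approximate the audit-logging goal above is a hash chain, where each record stores the hash of the previous one so that later edits become detectable. The Python sketch below illustrates that general technique under stated assumptions. It is not a description of the prototype's actual design: the field names, the `append_entry` and `verify_chain` helpers, and the `GENESIS` value are all hypothetical. Note that a hash chain makes a log tamper-evident rather than truly immutable; preventing edits also requires write-once storage.

```python
import hashlib
import json
import time

# Hypothetical starting value for the first record's prev_hash.
GENESIS = "0" * 64


def _digest(body: dict) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of the record."""
    return hashlib.sha256(
        json.dumps(body, sort_keys=True).encode("utf-8")
    ).hexdigest()


def append_entry(log: list, user: str, action: str, detail: str,
                 timestamp: float = None) -> dict:
    """Append a record that is linked to the previous record by its hash."""
    body = {
        "ts": time.time() if timestamp is None else timestamp,
        "user": user,
        "action": action,
        "detail": detail,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, "alice", "prompt_sent", "redactions=2")
    append_entry(log, "bob", "prompt_blocked", "policy=no_client_data")
    print(verify_chain(log))      # True
    log[0]["detail"] = "edited"   # simulate tampering
    print(verify_chain(log))      # False
```

Each record is a flat dictionary, so it can be serialized as one JSON line per event, a format most SIEM and SOAR tools can ingest.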

How to Participate

• Submit a Letter of Interest: Share your LLM security priorities

• Join a Discovery Call: A brief session to discuss your use cases and feedback

• Early Access Preview (coming soon): Test initial functionality, influence our roadmap, and secure priority onboarding

👉 Contact me to help shape a solution built for your real‑world needs.